Vector Databases: The Backbone of Modern AI

In today’s data-driven world, most of the data being generated is unstructured: text, images, audio, and similar formats. A commonly cited industry estimate puts the share at over 80%. Managing and using this data efficiently is crucial for the advancement of artificial intelligence (AI), and its rapid growth has created a pressing need for data management solutions that can handle both its complexity and its volume.

Enter vector databases: a revolutionary solution tailored for handling unstructured data. Unlike traditional databases that excel with structured data, vector databases are designed to store, index, and search data in vector form, making them indispensable in the era of AI.

This article explores vector databases: their fundamental concepts, their advantages, and how they are transforming AI applications across various domains. From recommendation systems and search engines to natural language processing and computer vision, vector databases are becoming a cornerstone technology that powers advanced AI solutions.

What are vector databases?

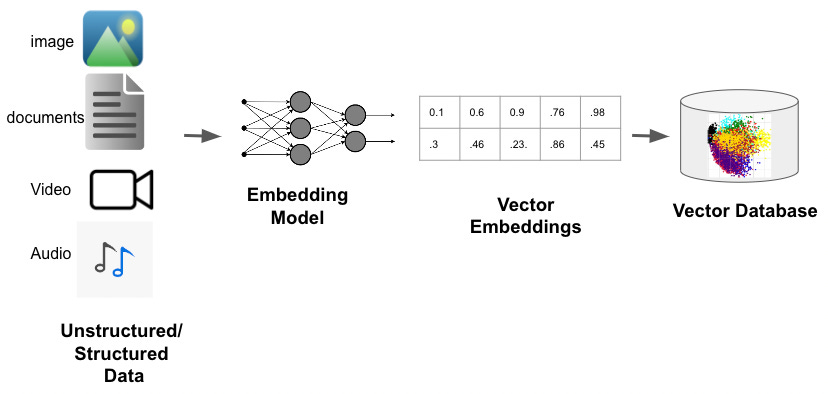

Vector databases are a type of database designed to handle unstructured data by storing and managing it in vector form. Unlike traditional databases, which store data in rows and columns and are optimized for structured data, vector databases excel at managing data types such as text, images, and audio. They use high-dimensional vectors to represent the data, enabling efficient similarity searches and precise data retrieval. By using similarity metrics instead of exact matches, vector databases allow ML or AI models to understand data contextually and enhance their ability to recall previous inputs, thus powering applications such as search, recommendations, and text generation.

Key Concepts: Vectors, Embeddings, and Their Use in Representing Data

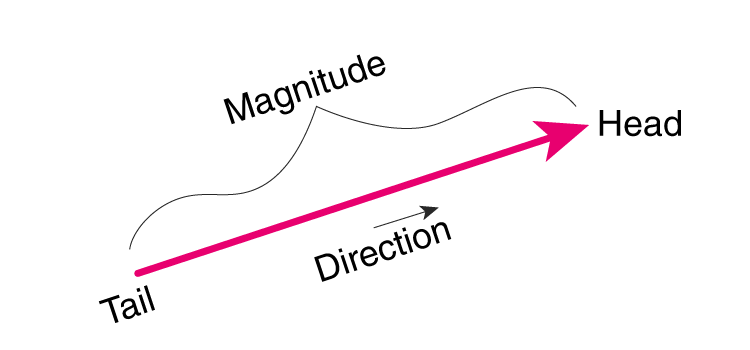

Vectors: A vector is an ordered list of numbers that, viewed geometrically, has both magnitude and direction. In the context of data, vectors represent data points in a multi-dimensional space, where each dimension captures some feature of the data. For example, a vector could represent a word, an image, or a piece of text. In learned representations, individual dimensions are usually not human-interpretable; it is the position of the vector relative to other vectors that encodes information about the data point.

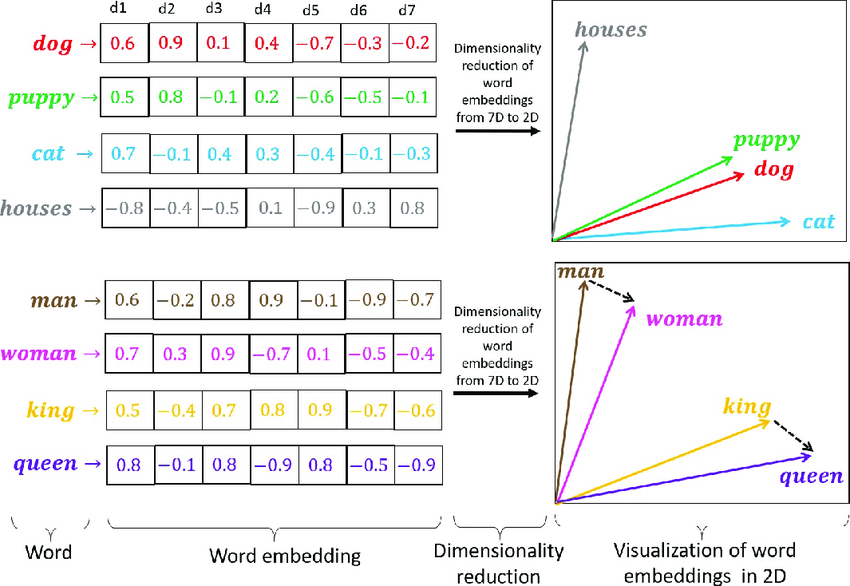

Embeddings: Embeddings are vectors that capture the semantic meaning of data. They are learned representations, usually generated by machine learning models, that map high-dimensional data into lower-dimensional spaces while preserving meaningful relationships. In natural language processing (NLP), embedding models like Word2Vec, GloVe, or BERT transform words or sentences into vectors. These vectors reflect the contextual and semantic similarities between different words or phrases. For instance, words with similar meanings will have vectors that are close to each other in the embedding space.

How They Are Used to Represent Data: Vectors and embeddings are powerful tools for representing complex data in a way that machines can understand and process. Here’s how they are typically used:

Text Data: In NLP, words, sentences, or entire documents are converted into vectors. These vectors capture semantic similarities, enabling applications like search engines, chatbots, and language models to understand and generate human language more effectively. For example, the word “king” might be represented by a vector close to the vector for “queen,” reflecting their semantic similarity.

Image Data: In computer vision, images are converted into vectors using techniques such as convolutional neural networks (CNNs). These vectors capture features like shapes, colors, and textures. This allows for tasks like image recognition, object detection, and similarity searches. For instance, a vector representing a cat image will be close to other cat images in the vector space.

Audio Data: Audio signals can be represented as vectors using techniques like spectrograms or Mel-frequency cepstral coefficients (MFCCs). These vectors capture features of the audio signal, enabling applications such as speech recognition, music recommendation, and audio classification.

General Data: Any type of unstructured data can be converted into vectors, enabling machine learning models to process and analyze it. This includes data from sensors, user interactions, and more.

By converting data into vectors and embeddings, vector databases can efficiently store, index, and search through large volumes of unstructured data. This capability is essential for many AI applications, where understanding the relationships and similarities between data points is crucial.
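To make the idea of "closeness" in an embedding space concrete, here is a minimal sketch. The three 4-dimensional vectors are hand-picked, hypothetical values chosen only for illustration; a real embedding model would produce learned vectors with hundreds or thousands of dimensions.

```python
import math

# Hypothetical 4-dimensional embeddings, hand-picked for illustration only.
toy_embeddings = {
    "king":  [0.90, 0.80, 0.10, 0.20],
    "queen": [0.85, 0.75, 0.20, 0.90],
    "apple": [0.10, 0.05, 0.95, 0.40],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

king_queen = cosine_similarity(toy_embeddings["king"], toy_embeddings["queen"])
king_apple = cosine_similarity(toy_embeddings["king"], toy_embeddings["apple"])

# Related words should score higher than unrelated ones.
print(f"king vs queen: {king_queen:.3f}")
print(f"king vs apple: {king_apple:.3f}")
```

With these toy values, "king" scores noticeably higher against "queen" than against "apple", which is the property a vector database relies on when it searches by similarity.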

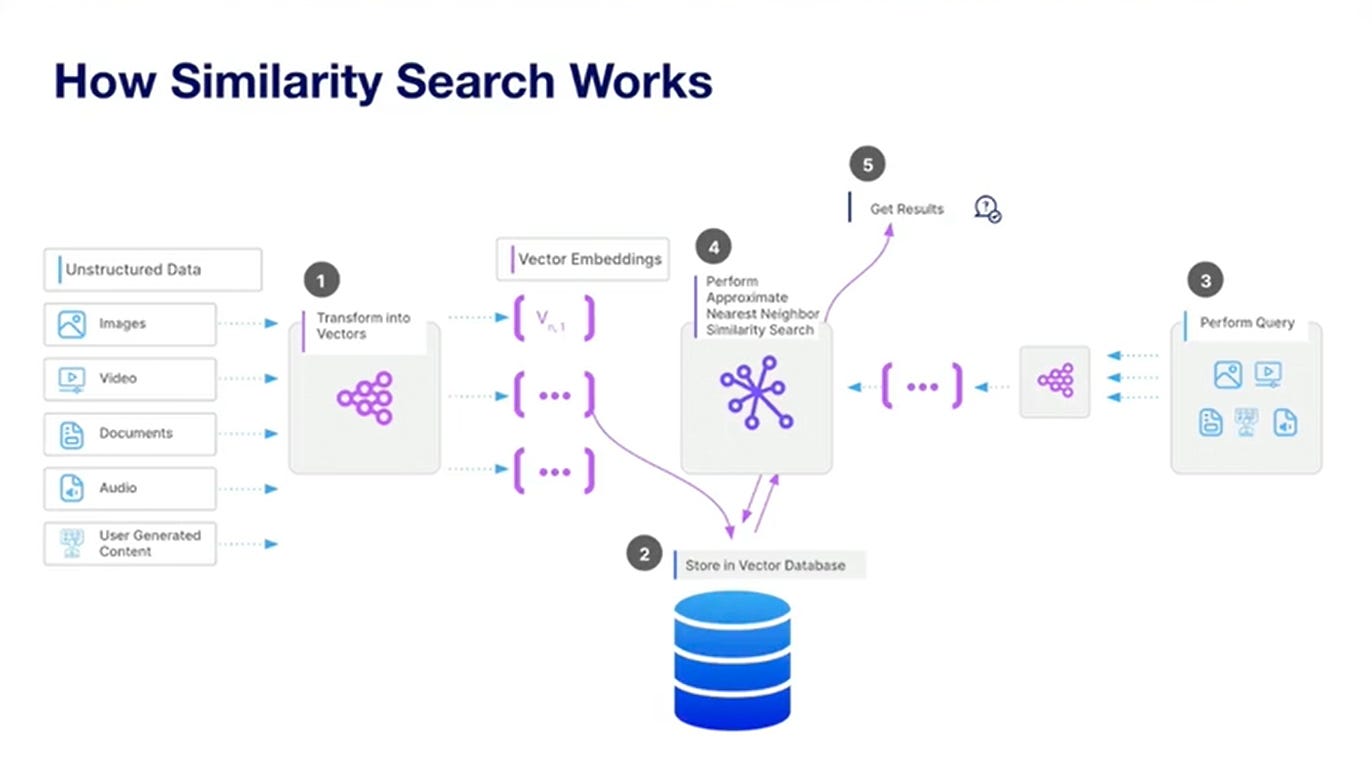

How Vector Databases Work: Querying with Indexing and Similarity Search

Vector databases are designed to handle complex queries efficiently by using specialized indexing and similarity search techniques. Here’s a detailed look at how this process works:

Data Transformation and Storage

Before querying can happen, data must be transformed into vectors. This transformation is done using various embedding techniques, depending on the type of data. For example:

Text data might be processed with NLP models like Word2Vec or BERT.

Image data could be processed using convolutional neural networks (CNNs).

Audio data might be transformed using spectrograms or MFCCs.

Once transformed, these vectors are stored in the vector database.
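The transform-then-store step can be sketched as follows. Everything here is an assumption made for illustration: `toy_embed` is a hashing stand-in rather than a trained model (it captures word overlap, not meaning), and `InMemoryVectorStore` is a hypothetical class, not the API of any particular vector database.

```python
import hashlib
import math

DIMENSIONS = 16  # assumed size; real embedding models use far more

def toy_embed(text):
    """Stand-in for a real embedding model: hashes each word into a bucket.

    A production system would call a trained model (for example Word2Vec
    or BERT) here instead.
    """
    vector = [0.0] * DIMENSIONS
    for word in text.lower().split():
        digest = hashlib.sha256(word.encode("utf-8")).digest()
        vector[digest[0] % DIMENSIONS] += 1.0
    norm = math.sqrt(sum(x * x for x in vector))
    # Normalize to unit length; an empty text stays the zero vector.
    return [x / norm for x in vector] if norm else vector

class InMemoryVectorStore:
    """Hypothetical minimal store: maps an id to its vector and original text."""

    def __init__(self):
        self.records = {}

    def upsert(self, record_id, text):
        """Insert a record, or overwrite it if the id already exists."""
        self.records[record_id] = {"vector": toy_embed(text), "text": text}

store = InMemoryVectorStore()
store.upsert("doc-1", "vector databases index embeddings")
store.upsert("doc-2", "relational databases store rows and columns")
print(len(store.records), "records stored, each with", DIMENSIONS, "dimensions")
```

Keeping the original text next to the vector is a common design choice, since the vector alone cannot be turned back into the source content when results are returned.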

Indexing

Indexing is a critical component that enables fast and efficient querying in vector databases. Traditional indexing methods like B-trees or hash tables are not suitable for high-dimensional vector spaces, because they are built for exact-match and range lookups on individual keys rather than for finding the nearest points across many dimensions at once. Instead, vector databases use specialized indexing structures. Some common indexing methods include:

KD-Trees: A space-partitioning binary tree that recursively splits the data along one coordinate axis at a time. While efficient for low-dimensional data, KD-trees become less effective as the number of dimensions increases, with searches degrading toward a full scan.

R-Trees: These are used for indexing multi-dimensional information such as geographical coordinates. They group nearby objects and represent them with their minimum bounding rectangle.

Hierarchical Navigable Small World (HNSW) Graphs: A graph-based method that organizes vectors into multiple layers, with each node connected to some of its near neighbors. A search starts in the sparse top layer and descends into denser layers, greedily moving toward the query at each step. HNSW allows for fast approximate nearest neighbor searches, which remain efficient even in high-dimensional spaces.
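Of the structures above, the KD-tree is the simplest to show in a few lines. The following is a minimal sketch, not a production index: it uses plain dictionaries for nodes, a median split on alternating axes, and an exact nearest-neighbor search that skips any subtree that cannot contain a closer point. The sample points and function names are assumptions made for illustration.

```python
def build_kd_tree(points, depth=0):
    """Recursively split the points on one axis per level, at the median."""
    if not points:
        return None
    axis = depth % len(points[0])
    ordered = sorted(points, key=lambda p: p[axis])
    mid = len(ordered) // 2
    return {
        "point": ordered[mid],
        "axis": axis,
        "left": build_kd_tree(ordered[:mid], depth + 1),
        "right": build_kd_tree(ordered[mid + 1:], depth + 1),
    }

def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def nearest(node, target, best=None):
    """Exact nearest-neighbor search with subtree pruning."""
    if node is None:
        return best
    if best is None or squared_distance(node["point"], target) < squared_distance(best, target):
        best = node["point"]
    diff = target[node["axis"]] - node["point"][node["axis"]]
    if diff < 0:
        near, far = node["left"], node["right"]
    else:
        near, far = node["right"], node["left"]
    best = nearest(near, target, best)
    # Visit the far side only if the splitting plane is closer than the best match.
    if diff ** 2 < squared_distance(best, target):
        best = nearest(far, target, best)
    return best

points = [(2, 3), (5, 4), (9, 6), (4, 7), (8, 1), (7, 2)]
tree = build_kd_tree(points)
print(nearest(tree, (9, 2)))
```

In two dimensions the pruning test discards most of the tree. As dimensions grow, the splitting plane is almost always closer than the current best match, so fewer subtrees can be skipped, which is why graph-based methods such as HNSW are preferred for embeddings.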

Querying with Similarity Search

When a query is made, the goal is to find vectors in the database that are similar to the query vector. This is done using similarity search, which involves the following steps:

Query Vector Transformation: The query input is transformed into a vector using the same embedding technique used for the stored data.

Index Search: The query vector is compared against the indexed vectors to find the most similar ones. This is where the indexing method plays a crucial role in speeding up the search process. For example, in an HNSW graph, the search algorithm will traverse the graph to find nodes (vectors) that are closest to the query vector.

Distance Metrics: Similarity is typically measured using distance or similarity metrics such as:

Euclidean Distance: Measures the straight-line distance between two points in a vector space. Smaller values mean more similar vectors.

Cosine Similarity: Measures the cosine of the angle between two vectors, which indicates how similar their orientations are regardless of their magnitudes. Unlike the two distances listed here, higher values mean more similar vectors.

Manhattan Distance: Measures the sum of the absolute differences between the coordinates of two vectors. Smaller values mean more similar vectors.

Nearest Neighbors Retrieval: Based on the distance metric, the nearest neighbors (most similar vectors) to the query vector are retrieved. These are the data points that are most relevant to the query.

Result Processing: The retrieved vectors are processed and presented as results. In applications like recommendation systems, this might involve displaying items similar to the user’s query.
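The query steps above can be sketched with a brute-force search, which compares the query against every stored vector and skips the index entirely. The stored vectors, ids, and the `top_k` helper are hypothetical and assume the data has already been embedded.

```python
import math

def euclidean_distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def manhattan_distance(a, b):
    return sum(abs(x - y) for x, y in zip(a, b))

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

METRICS = {
    "euclidean": euclidean_distance,
    "manhattan": manhattan_distance,
    # Negate similarity so that, for every metric, smaller means closer.
    "cosine": lambda a, b: -cosine_similarity(a, b),
}

def top_k(query, vectors, k, metric="cosine"):
    """Return the ids of the k stored vectors closest to the query, best first."""
    score = METRICS[metric]
    ranked = sorted(vectors, key=lambda item_id: score(query, vectors[item_id]))
    return ranked[:k]

# Hypothetical stored vectors, already embedded.
stored = {
    "a": [1.0, 0.0],
    "b": [0.9, 0.1],
    "c": [0.0, 1.0],
    "d": [5.0, 0.25],  # same direction as the query, but five times longer
}
query_vector = [1.0, 0.05]

for name in ("cosine", "euclidean", "manhattan"):
    print(name, top_k(query_vector, stored, k=2, metric=name))
```

Vector "d" ranks first under cosine similarity because it points in the same direction as the query, but it drops out of the top two under Euclidean and Manhattan distance because it is far away in absolute terms. Choosing the metric that matches how the embedding model was trained is therefore part of the design.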

Efficiency and Approximation

To enhance efficiency, especially with large datasets, vector databases often use approximate nearest neighbor (ANN) search algorithms. These algorithms provide a trade-off between accuracy and search speed, delivering results that are nearly as good as exact searches but much faster. HNSW is a popular ANN algorithm used for this purpose.
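The accuracy-for-speed trade-off can be observed with a small experiment. The sketch below does not implement HNSW; it swaps in random-hyperplane hashing, a much simpler ANN technique, because it fits in a few lines. The dataset is random, and the sizes and seed are arbitrary assumptions.

```python
import math
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def random_unit_vector(dimensions, rng):
    vector = [rng.gauss(0, 1) for _ in range(dimensions)]
    norm = math.sqrt(dot(vector, vector))
    return [x / norm for x in vector]

rng = random.Random(42)  # fixed seed so the run is repeatable
DIMENSIONS, NUM_VECTORS, NUM_PLANES, NUM_QUERIES = 8, 2000, 4, 100

vectors = [random_unit_vector(DIMENSIONS, rng) for _ in range(NUM_VECTORS)]
planes = [random_unit_vector(DIMENSIONS, rng) for _ in range(NUM_PLANES)]

def signature(vector):
    """One bit per hyperplane: which side of the plane the vector falls on."""
    return tuple(dot(plane, vector) >= 0 for plane in planes)

# Index step: group vector ids by signature so similar directions share a bucket.
buckets = {}
for index, vector in enumerate(vectors):
    buckets.setdefault(signature(vector), []).append(index)

def exact_search(query):
    """Scan every vector (unit length, so dot product equals cosine similarity)."""
    return max(range(NUM_VECTORS), key=lambda i: dot(query, vectors[i]))

def approximate_search(query):
    """Scan only the query's bucket; may miss the true nearest neighbor."""
    candidates = buckets.get(signature(query), [])
    if not candidates:
        return None, 0
    best = max(candidates, key=lambda i: dot(query, vectors[i]))
    return best, len(candidates)

hits = 0
scanned = 0
for _ in range(NUM_QUERIES):
    query = random_unit_vector(DIMENSIONS, rng)
    approx_id, num_candidates = approximate_search(query)
    if approx_id == exact_search(query):
        hits += 1
    scanned += num_candidates

recall = hits / NUM_QUERIES
average_scanned = scanned / NUM_QUERIES
print(f"recall@1: {recall:.2f}, average vectors scanned: {average_scanned:.0f} of {NUM_VECTORS}")
```

The approximate search inspects only a fraction of the stored vectors, and in exchange it sometimes returns a neighbor that is not the true nearest one. Adding more hyperplanes makes buckets smaller and searches faster but lowers recall; production ANN indexes expose comparable tuning knobs.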

By leveraging these advanced indexing and similarity search techniques, vector databases can perform rapid and accurate queries, making them ideal for AI applications that require real-time data retrieval and analysis. This capability is essential for powering functionalities like personalized recommendations, image and video search, and natural language understanding.

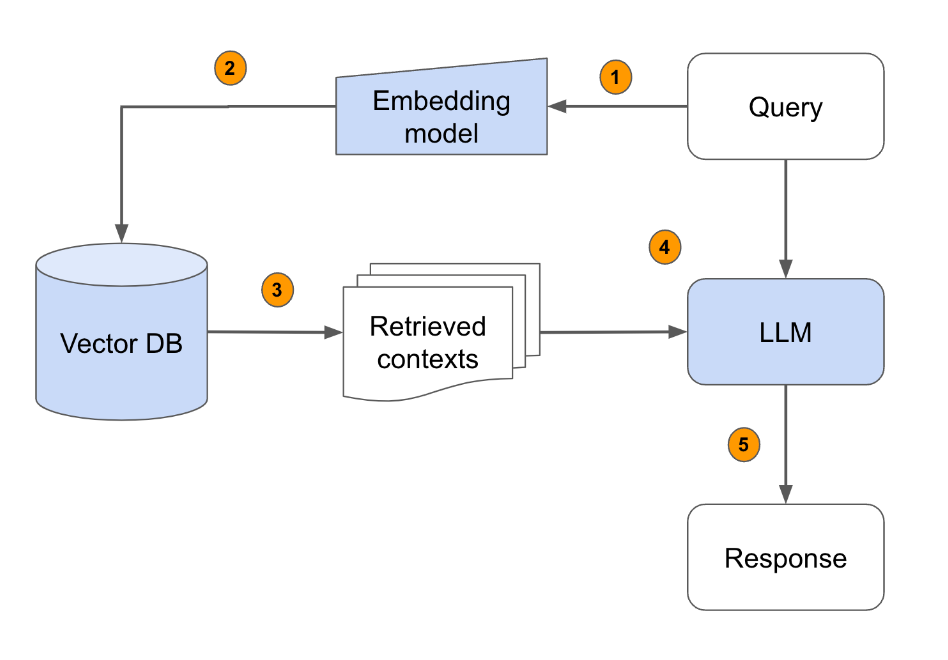

Integration with AI: How Vector Databases Enhance Machine Learning and AI Models

Real-Time Data Retrieval

AI applications often require real-time data retrieval to function effectively. Vector databases enable this through efficient similarity search algorithms. For example:

Recommendation Systems: When a user interacts with a platform, the AI model queries the vector database to find similar items based on the user’s preferences, providing real-time recommendations.

Search Engines: Vector databases enhance search engines by allowing them to retrieve results that are contextually similar to the query, rather than just exact keyword matches. This improves the relevance of search results.

Chatbots and Virtual Assistants: These systems use vector databases to understand and generate responses based on the context of the conversation, making interactions more natural and meaningful.
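As an illustration of the recommendation case, the sketch below averages the vectors of items a user liked into a profile vector and returns the closest items the user has not seen. The item names and 3-dimensional vectors are hypothetical, and averaging is only one simple way to build a user profile.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical item embeddings (3 dimensions for readability).
item_vectors = {
    "sci-fi novel":      [0.9, 0.1, 0.0],
    "space documentary": [0.8, 0.2, 0.1],
    "cookbook":          [0.0, 0.9, 0.2],
    "baking show":       [0.1, 0.8, 0.3],
    "fantasy novel":     [0.7, 0.0, 0.3],
}

def recommend(liked_items, k=2):
    """Return the k unseen items closest to the average of the liked items."""
    liked = [item_vectors[name] for name in liked_items]
    # Element-wise mean of the liked vectors acts as the user's profile.
    profile = [sum(column) / len(liked) for column in zip(*liked)]
    candidates = [name for name in item_vectors if name not in liked_items]
    candidates.sort(
        key=lambda name: cosine_similarity(profile, item_vectors[name]),
        reverse=True,
    )
    return candidates[:k]

print(recommend(["sci-fi novel"]))
print(recommend(["cookbook"]))
```

In a real deployment the sort over all candidates would be replaced by a query against the vector database's index, with the profile vector as the query.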

Training and Inference

Vector databases also play a significant role in the training and inference phases of machine learning models:

Training Data Management: During the training phase, models often require access to large datasets. Vector databases store these datasets as vectors, allowing models to efficiently retrieve and use them during training. This is particularly useful for tasks like image classification, where models need to access and process vast amounts of image data.

Feature Engineering: Machine learning models benefit from feature vectors stored in vector databases, which can be used to enhance the feature engineering process. By retrieving relevant feature vectors, models can be trained more effectively.

Model Inference: During inference, when the model is making predictions based on new data, vector databases provide the necessary context by quickly retrieving similar data points. This improves the accuracy and relevance of the predictions.
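One simple way retrieved neighbors can inform a prediction is k-nearest-neighbor voting: look up the stored points closest to the new input and take the majority label. This is a minimal sketch with made-up 2-dimensional points and labels, and it is only one of several ways similar data points can be used at inference time.

```python
from collections import Counter

def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

# Hypothetical labeled vectors, as they might be stored alongside metadata.
labeled = [
    ([1.0, 1.0], "cat"),
    ([1.2, 0.9], "cat"),
    ([0.9, 1.3], "cat"),
    ([5.0, 5.0], "dog"),
    ([5.2, 4.8], "dog"),
    ([4.9, 5.3], "dog"),
]

def predict(query, k=3):
    """Label a new vector by majority vote among its k nearest stored vectors."""
    neighbors = sorted(labeled, key=lambda record: squared_distance(query, record[0]))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]

print(predict([1.1, 1.0]))
print(predict([5.1, 5.0]))
```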

Enhancing AI Model Capabilities

Vector databases significantly enhance the capabilities of AI models in several ways:

Contextual Understanding: By storing data as vectors that capture semantic meaning, AI models can better understand the context and relationships between different data points. This is crucial for applications like language models and recommendation systems.

Scalability: Vector databases are designed to handle large volumes of unstructured data, making it easier to scale AI applications. They provide efficient indexing and retrieval mechanisms that can manage growing datasets without compromising performance.

Flexibility: The ability to handle diverse data types (text, images, audio) makes vector databases versatile. AI models can leverage this flexibility to work with various forms of data, enhancing their functionality and applicability.

Continuous Learning: As new data is generated and embedded, vector databases can be updated, allowing AI applications to draw on new information without retraining the underlying model.

Challenges and Considerations

Implementation Complexity

Implementing and maintaining vector databases can be challenging due to several factors:

Technical Expertise: Setting up and optimizing vector databases requires specialized knowledge in machine learning, data indexing, and vector mathematics.

Integration: Seamlessly integrating vector databases with existing systems and AI models can be complex, necessitating custom solutions and potential changes to infrastructure.

Scalability: As data grows, maintaining the performance and efficiency of vector databases can become increasingly difficult, requiring ongoing optimization and scaling efforts.

Cost

The cost implications of adopting vector databases can be significant:

Initial Investment: Setting up vector databases involves purchasing or licensing software, hardware costs, and possibly hiring experts, leading to high initial expenses.

Operational Costs: Running and maintaining vector databases incurs continuous costs, including cloud storage, compute resources, and regular maintenance.

Training and Development: Ongoing training for staff and development of custom solutions can add to the overall cost, especially as technology evolves and new features or optimizations are required.

Data Privacy

Data privacy and security are critical considerations when implementing vector databases:

Sensitive Data: Storing sensitive information in vector form requires robust security measures to prevent unauthorized access and data breaches.

Compliance: Ensuring compliance with data protection regulations (such as GDPR, CCPA) can be complex, requiring careful management of data storage, processing, and access controls.

Encryption: Implementing encryption for data both at rest and in transit is essential to safeguard against potential security threats, but it can also add to the complexity and cost of maintaining the database.

By carefully addressing these challenges and considerations, organizations can effectively leverage the power of vector databases while managing the associated risks and costs.

Future of Vector Databases in AI

The future of vector databases in AI looks promising. Key trends include better scalability, more efficient indexing, tighter integration with deep learning frameworks, multi-modal data support, privacy enhancements, and explainability. Integration with edge computing is expected to enable real-time data processing closer to where data is produced, while advances in indexing algorithms and hardware acceleration should improve scalability and performance. Automation tools are likely to simplify data management, and interoperability standards could make integration with AI frameworks smoother. Growing adoption across sectors will fuel further innovation, alongside emerging technologies such as quantum computing, federated learning, and advanced privacy techniques. Adaptive indexing algorithms and hybrid storage solutions may further improve the efficiency and flexibility of vector databases, solidifying their role in advancing AI capabilities.